A native macOS voice assistant in the spirit of Iron Man’s Jarvis: it lives in the menu bar, runs a hands-free voice loop, actually controls the Mac (opens apps, types text, clicks the mouse, drives Safari/Chrome tabs), and searches a personal RAG knowledge base.

Designed for real agentic flow: say “Open Safari and search Facebook” → Jarvis opens Safari → focuses URL bar → types → presses Enter. No hallucinated success.

At a glance

- Native Swift binary ~9MB, full DMG ~50MB, vs the Electron prototype at 1.5GB (about 30× smaller as a DMG, roughly 170× comparing the binary alone)

- 40 tools registered: Mac control (6) + browser Safari/Chrome (15) + input/keyboard/mouse (10) + KB search (2) + weather/maps (4) + on-device vision (placeholder)

- 3 switchable LLM backends: Cloud Haiku ($0.001/turn), Local Hermes 3 8B (free), Apple Foundation Models (macOS 26+)

- End-to-end voice loop <2s with Cloud Haiku: STT (~300ms) → LLM (~800ms) → TTS playback

- Continuous mode — auto-listens after each reply, interrupted by saying “stop” / “wait” / “cancel”

- Dictation hotkey Cmd+Shift+D — types into any focused field (replaces Apple Dictation, uses on-device SFSpeech)

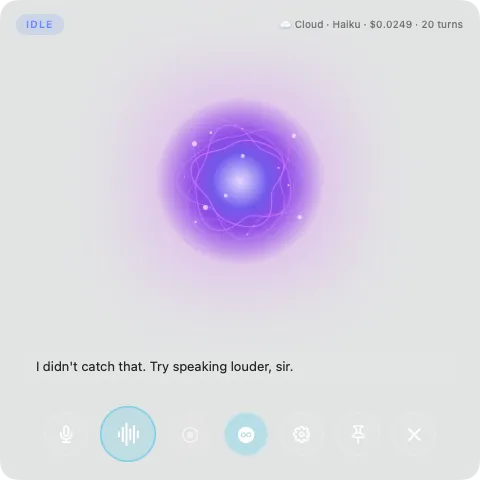

- Custom Siri-class visualizer — SwiftUI Canvas-based noise-displaced sphere, 3 styles switchable (Orb / Particles / Wave Rings)

- Privacy posture: audio + STT + KB + tool execution stay 100% on-device; only the transcript text leaves the device when Cloud LLM is selected

Stack

Swift 6.0 · SwiftUI · AVAudioEngine · SFSpeechRecognizer (on-device) · AVSpeechSynthesizer (Premium voices) · Anthropic Claude Haiku 4.5 · Ollama (Hermes 3 / Qwen) · Apple Foundation Models (macOS 26+) · NSStatusItem + NSPanel · Carbon RegisterEventHotKey · AXUIElement + CGEvent + NSAppleScript · Keychain Services

UX flow

Push-to-talk turn:

- User presses Cmd+Shift+J (global hotkey) or clicks the 🎤 button

- Floating panel appears, visualizer turns cyan (LISTENING)

- User speaks the command

- Energy-based VAD detects end-of-speech (700ms silence) → SFSpeech finalizes

- Visualizer turns amber (THINKING) — Claude Haiku invokes the tool sequence

- Visualizer turns green (SPEAKING) — AVSpeechSynthesizer plays the reply through a Premium voice (Ava/Zoe/Evan)

- Returns to IDLE; panel hides if user clicks ✕
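The end-of-speech step in the turn above can be sketched as a small energy-based detector. This is a minimal illustration, not the app's actual code: the type name `EndOfSpeechDetector`, the RMS threshold of 0.01, and the buffer-by-buffer API are assumptions; only the 700ms silence window comes from the flow described above.

```swift
import Foundation

/// Energy-based end-of-speech detector (hypothetical name and threshold).
struct EndOfSpeechDetector {
    let energyThreshold: Float       // RMS below this counts as silence (assumed value)
    let silenceWindow: TimeInterval  // 0.7 s, matching the 700ms in the flow above
    private var silentFor: TimeInterval = 0
    private var heardSpeech = false

    init(energyThreshold: Float = 0.01, silenceWindow: TimeInterval = 0.7) {
        self.energyThreshold = energyThreshold
        self.silenceWindow = silenceWindow
    }

    /// Root-mean-square energy of one buffer of samples in [-1, 1].
    static func rms(_ samples: [Float]) -> Float {
        guard !samples.isEmpty else { return 0 }
        let sumOfSquares = samples.reduce(Float(0)) { $0 + $1 * $1 }
        return (sumOfSquares / Float(samples.count)).squareRoot()
    }

    /// Feed one buffer; returns true once speech has been heard
    /// and the silence that follows has lasted at least `silenceWindow`.
    mutating func process(_ samples: [Float], duration: TimeInterval) -> Bool {
        if Self.rms(samples) >= energyThreshold {
            heardSpeech = true
            silentFor = 0          // any loud buffer resets the silence timer
        } else if heardSpeech {
            silentFor += duration  // silence only counts after speech started
        }
        return heardSpeech && silentFor >= silenceWindow
    }
}
```

In a real app the buffers would presumably come from an AVAudioEngine input tap, and a `true` result would be the cue to ask SFSpeech to finalize. Ignoring silence before the first loud buffer keeps the detector from ending a turn before the user has said anything.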

Continuous mode: toggle the ♾️ icon → after Jarvis finishes speaking, listening auto-restarts after 400ms (delay to avoid feedback). Interrupt by saying “stop” or clicking ⏹.
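The push-to-talk turn and continuous mode together amount to a four-state machine. The sketch below is one way to model it, under assumptions: the type and event names are hypothetical, colors are plain strings rather than SwiftUI colors, and the 400ms restart delay is left to the caller.

```swift
import Foundation

enum AssistantState { case idle, listening, thinking, speaking }

/// Events that drive the loop (hypothetical names).
enum VoiceEvent { case hotkeyPressed, speechEnded, replyReady, playbackFinished, interrupted }

struct VoiceLoop {
    private(set) var state: AssistantState = .idle
    var continuousMode = false

    /// Visualizer color per state: cyan, amber, and green as in the turn above;
    /// blue for idle follows the palette listed in the decision log.
    var color: String {
        switch state {
        case .idle: return "blue"
        case .listening: return "cyan"
        case .thinking: return "amber"
        case .speaking: return "green"
        }
    }

    mutating func handle(_ event: VoiceEvent) {
        switch (state, event) {
        case (_, .interrupted):
            state = .idle  // "stop" or the stop button ends the turn from any state
        case (.idle, .hotkeyPressed):
            state = .listening
        case (.listening, .speechEnded):
            state = .thinking
        case (.thinking, .replyReady):
            state = .speaking
        case (.speaking, .playbackFinished):
            // In continuous mode the caller would wait ~400ms before reopening the mic.
            state = continuousMode ? .listening : .idle
        default:
            break          // ignore events that make no sense in the current state
        }
    }
}
```

Keeping the transitions in one `switch` makes it hard for the visualizer color and the audio pipeline to drift out of sync, since both read the same `state`.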

Dictation: focus any text field → Cmd+Shift+D → speak the sentence → AX-paste inserts text directly (no clipboard pollution).

Architecture

    ┌─ Jarvis.app (Swift native, ~9MB) ──────────────────────────┐
    │                                                             │
    │  StatusBarController (NSStatusItem)                         │
    │         │                                                   │
    │         ▼                                                   │
    │  FloatingPanel (NSPanel translucent + NSHostingView)        │
    │         │                                                   │
    │  ┌──────┴────────────────────────────────────────────────┐  │
    │  │ SwiftUI: VisualizerView + Transcript + Controls       │  │
    │  │   - JarvisOrb (Canvas multi-octave noise)             │  │
    │  │   - ParticlesViz / WaveRingsViz alternatives          │  │
    │  └───────────────────────────────────────────────────────┘  │
    │                                                             │
    │  ┌─ Voice loop ──────────────────────────────────────────┐  │
    │  │ AudioEngine → SpeechRecognizer (SFSpeech) → ...       │  │
    │  │   → ConversationEngine → LLMProvider                  │  │
    │  │   → TTSEngine (AVSpeechSynthesizer)                   │  │
    │  └───────────────────────────────────────────────────────┘  │
    │                                                             │
    │  ┌─ ToolRegistry (40 tools, async actor) ────────────────┐  │
    │  │ MacTools · InputTools · BrowserTools                  │  │
    │  │ KBTools · LocationTools                               │  │
    │  └───────────────────────────────────────────────────────┘  │
    │                                                             │
    │  ┌─ LLMProvider protocol ────────────────────────────────┐  │
    │  │ ClaudeProvider (URLSession + prompt caching)          │  │
    │  │ OllamaProvider (Hermes 3 / Qwen)                      │  │
    │  │ FoundationModelsProvider (macOS 26+)                  │  │
    │  └───────────────────────────────────────────────────────┘  │
    └─────────────────────────────────────────────────────────────┘

    External:
      personal-rag-kb HTTP server  :8080   (RAG over ~/Documents/KB/)
      Ollama daemon                :11434  (local LLM)

No Python sidecar. Pure Swift binary. The KB server is just a separate HTTP endpoint the user runs.
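To make the relationship between the `LLMProvider` protocol and the `ToolRegistry` actor concrete, here is a minimal sketch. Only the names `LLMProvider`, `ToolRegistry`, `open_app`, `type_text`, and `press_key` appear in this document; the `ToolCall` struct, the `plan(for:)` method, `ScriptedProvider`, and `runTurn` are assumptions for illustration. A real turn would likely feed each tool result back to the model instead of planning once, and the URL-bar focus step is omitted here.

```swift
import Foundation

/// One tool invocation requested by the model (hypothetical shape).
struct ToolCall: Sendable {
    let name: String
    let arguments: [String: String]
}

enum ToolError: Error { case unknownTool(String) }

/// Backend abstraction: the Claude, Ollama, and Foundation Models providers would each conform.
protocol LLMProvider {
    func plan(for transcript: String) async throws -> [ToolCall]
}

/// An actor, so concurrent turns cannot race on the handler table.
actor ToolRegistry {
    typealias Handler = @Sendable ([String: String]) async throws -> String
    private var handlers: [String: Handler] = [:]

    func register(_ name: String, handler: @escaping Handler) {
        handlers[name] = handler
    }

    func run(_ call: ToolCall) async throws -> String {
        guard let handler = handlers[call.name] else {
            throw ToolError.unknownTool(call.name)
        }
        return try await handler(call.arguments)
    }
}

/// Stand-in provider that returns a fixed chain instead of calling a model.
struct ScriptedProvider: LLMProvider {
    func plan(for transcript: String) async throws -> [ToolCall] {
        [
            ToolCall(name: "open_app", arguments: ["name": "Safari"]),
            ToolCall(name: "type_text", arguments: ["text": "Facebook"]),
            ToolCall(name: "press_key", arguments: ["key": "Enter"]),
        ]
    }
}

/// Runs every planned call in order; an unknown tool throws instead of being reported as done.
func runTurn<P: LLMProvider>(_ transcript: String, provider: P, registry: ToolRegistry) async throws -> [String] {
    var results: [String] = []
    for call in try await provider.plan(for: transcript) {
        results.append(try await registry.run(call))
    }
    return results
}
```

With stub handlers registered for the three tool names, `runTurn("Open Safari and search Facebook", ...)` returns one result string per executed call, which is what the spoken reply would be built from.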

Decision log

1. Native Swift over Electron

Started the prototype in Electron (Three.js visualizer + Python backend). It worked, but shipped as a 1.5GB bundle. Rewrote it in native Swift over a weekend: a 50MB DMG, native macOS feel, and no Python deployment headache.

2. Cloud Haiku is the default; Local is a fallback

Tested Qwen 2.5:7b and Hermes 3:8b locally for agentic tool use. Both failed at multi-step chains (open_app + type_text + press_key): they confidently hallucinated “I typed Hello” without ever calling the tool. Switching to Claude Haiku brought accuracy on the same chains to 100%, at about $0.001/turn.

Result: Cloud is the default; Local is kept for privacy mode or chit-chat. Details are in the Local vs Cloud LLM blog post.
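The failure mode described here (a reply that claims an action no tool performed) can be caught with a simple cross-check between the reply text and the tools that actually ran. The following is a crude keyword heuristic offered as an illustration, not a description of how Jarvis handles it; `ModelTurn`, `unsupportedClaims`, and the claim-to-tool mapping are all hypothetical.

```swift
import Foundation

/// What came back from one model turn (hypothetical shape).
struct ModelTurn {
    let replyText: String
    let executedTools: [String]  // names of tools that actually ran this turn
}

/// Returns the action words in the reply that no executed tool backs up.
/// `claimToTool` maps a lowercase claim word to the tool that would justify it.
func unsupportedClaims(in turn: ModelTurn, claimToTool: [String: String]) -> [String] {
    let reply = turn.replyText.lowercased()
    return claimToTool
        .filter { reply.contains($0.key) && !turn.executedTools.contains($0.value) }
        .keys
        .sorted()
}
```

A reply of “I typed Hello” with an empty `executedTools` list would be flagged on the word “typed”, at which point the app could retry the turn or tell the user the action did not happen rather than speak the hallucinated confirmation.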

3. Push-to-talk over wake word for V1

Always-listening “Hey Jarvis” is sexy in demos, but in production it brings:

- Battery drain 15–25% per day

- 2–5 false triggers per day

- Privacy concerns

V1 ships push-to-talk Cmd+Shift+J. Wake word ships in V1.5 with an openWakeWord local model.

4. SFSpeech over WhisperKit for V1

Whisper (via WhisperKit on the Neural Engine) has higher accuracy but:

- ~150MB model download on first run

- 5–8s cold start

- Cache management overhead

SFSpeech is on-device, free, instant, and accurate enough (90%+) for a voice agent. Will upgrade to WhisperKit in V2 if multi-language is needed.

5. Custom Canvas visualizer over metasidd/Orb

Tried the metasidd/Orb Swift package — pretty, but iOS-only (UIKit). Built a custom SwiftUI Canvas implementation: multi-octave noise sphere + sparkle particles + Fresnel halo glow. State-driven palette (idle blue → listening cyan → thinking amber → speaking green).

3 visualizer styles selectable by the user: Orb (default), Particles, Wave Rings.
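The “multi-octave noise” behind the Orb style is the usual trick of summing several layers of a base noise, doubling the frequency and halving the amplitude each layer. The sketch below shows only that math, with assumptions stated plainly: `wobble` is a placeholder base function (a real visualizer would more likely use gradient or simplex noise), and `displacedRadius` with its parameters is hypothetical.

```swift
import Foundation

/// Sums `octaves` layers of `base`, doubling frequency and halving amplitude per layer,
/// then normalizes so the result stays within the range of the base noise.
func multiOctave(_ x: Double, _ y: Double, octaves: Int, base: (Double, Double) -> Double) -> Double {
    var total = 0.0
    var norm = 0.0
    var amplitude = 1.0
    var frequency = 1.0
    for _ in 0..<max(octaves, 1) {
        total += amplitude * base(x * frequency, y * frequency)
        norm += amplitude
        amplitude *= 0.5
        frequency *= 2.0
    }
    return total / norm
}

/// Placeholder base noise in [-1, 1] (an assumption, not the app's noise function).
func wobble(_ x: Double, _ y: Double) -> Double {
    sin(x * 1.7 + cos(y * 2.3))
}

/// Radius of the orb outline at `angle`, displaced by noise that drifts with `time`.
func displacedRadius(angle: Double, time: Double, baseRadius: Double, strength: Double) -> Double {
    let n = multiOctave(cos(angle) + time, sin(angle) + time, octaves: 4, base: wobble)
    return baseRadius * (1 + strength * n)
}
```

Inside a SwiftUI `Canvas`, a function like `displacedRadius` would be sampled around the circle each frame to build the outline path, with `strength` presumably tied to the current audio level or assistant state.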

Numbers

- Bundle: 9MB binary, 50MB DMG (vs Electron at 1.5GB)

- RAM: ~80MB resident steady-state, ~200MB during a turn

- Cost with Cloud Haiku + prompt caching: ~$0.001/turn × 30 turns/day × 30 days ≈ $0.90, i.e. ~$1/month

- Latency end-to-end voice loop: ~1.5–2s (Cloud) / ~3–4s (Local)

- Tool count: 40 (Mac / Browser / Input / KB / Location)
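The cost and size figures above are simple arithmetic, reproduced here so the assumptions are explicit: a 30-day month, 1.5GB taken as 1500MB, and the per-turn cost and turn count quoted in the list. The function names are illustrative only.

```swift
import Foundation

/// Monthly LLM cost in USD for a given per-turn cost and daily usage.
func monthlyCost(perTurn: Double, turnsPerDay: Double, days: Double = 30) -> Double {
    perTurn * turnsPerDay * days
}

/// How many times smaller `newMB` is than `oldMB`.
func sizeRatio(oldMB: Double, newMB: Double) -> Double {
    oldMB / newMB
}

let cost = monthlyCost(perTurn: 0.001, turnsPerDay: 30)    // about 0.90 USD
let dmgRatio = sizeRatio(oldMB: 1500, newMB: 50)           // 30
let binaryRatio = sizeRatio(oldMB: 1500, newMB: 9)         // about 167

print(String(format: "monthly cost: $%.2f", cost))
print(String(format: "DMG: %.0fx smaller, binary: %.0fx smaller", dmgRatio, binaryRatio))
```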

Documentation

| Doc | Read this for |
|---|---|
| Blog post | Decision framework for product/CTO teams choosing between local and cloud LLM |

Out of scope V1 / roadmap V1.5+

- Vietnamese voice support (viXTTS or ElevenLabs Multilingual)

- User-specific voice cloning (F5-TTS)

- Always-on “Hey Jarvis” wake word

- Hybrid intent router (lightweight classifier routing Local vs Cloud)

- Persistent cross-session conversation memory (currently per-session)

- Apple Developer ID signing + notarization → ship a clean public DMG

- Computer-use vision (Anthropic Computer Use API or native screenshot+Claude vision)